The Voice Paradox: $11 Billion In, Nobody's Talking

Voice AI investment is exploding while voice assistant usage declines. But the real opportunity isn't voice-speaking machines. It's voice input with text output, and that technology just became ready.

In January 2025, ElevenLabs raised $180 million at a $3.3 billion valuation. By February 2026, the company reached an $11 billion valuation.

Meanwhile, Amazon's Alexa division has struggled to find its footing. Back in 2022, the division was on track to lose $10 billion, with insiders calling it a "colossal failure of imagination." Despite ongoing AI integration efforts, the fundamental challenges remain.

Both are voice AI. One is exploding. One never found product-market fit. The difference explains everything about what's actually coming.

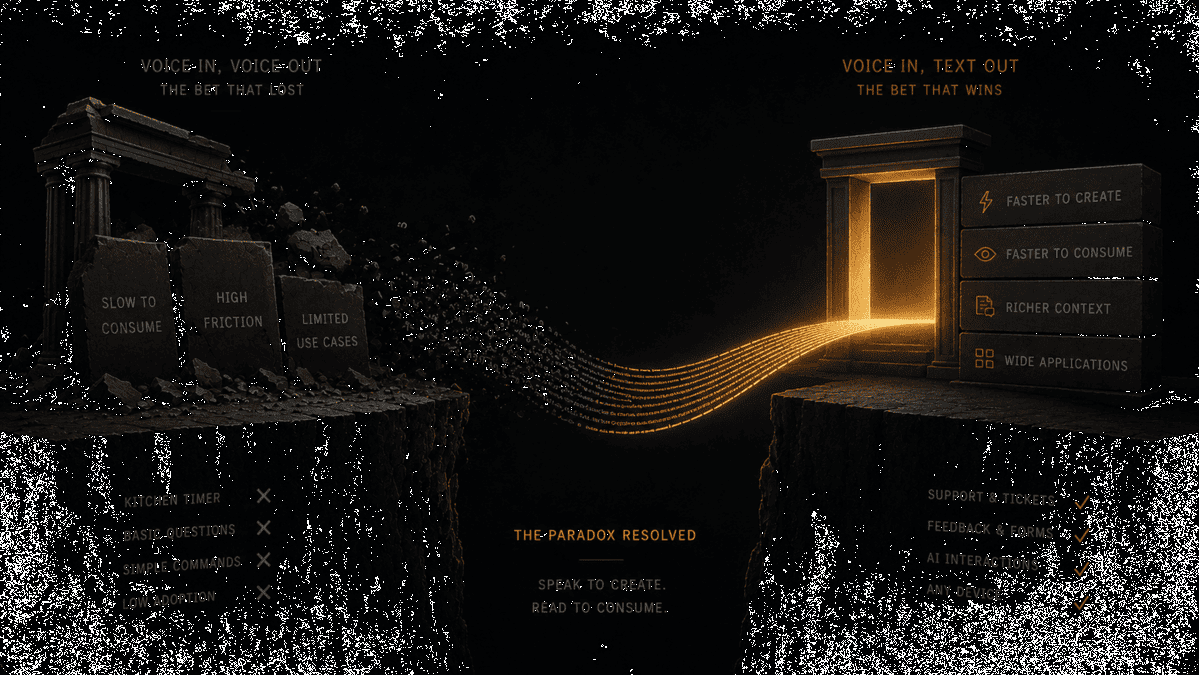

The Wrong Bet: Voice In, Voice Out

Alexa, Siri, and Google Assistant made a specific bet: humans would talk to machines, and machines would talk back. Voice in, voice out. The "Her" future where you have conversations with your computer.

This bet failed for two reasons nobody wants to admit.

First, the technology wasn't ready. Voice recognition in 2014 worked well enough for "set a timer for 10 minutes" but collapsed on anything complex. Accents, background noise, natural speech patterns, all of it degraded accuracy. Users learned quickly: simple commands work, anything else doesn't. So they stopped trying.

Second, and more fundamentally: voice output is slow.

You read at 250-300 words per minute. A voice agent speaks at 150. When Alexa tells you the weather, you have to sit there and listen. When you read the weather on your phone, you glance for half a second.

This asymmetry is brutal. Research shows humans absorb information from text dramatically faster than from speech. Voice assistants forced users to consume information at the slower rate. For anything beyond "what's 17 times 4," the experience was frustrating.

Users didn't consciously realize this. They just felt that voice assistants were annoying for anything important. They defaulted to primitive tasks: timers, alarms, basic questions. The technology that was supposed to transform how we interact with computers became a kitchen timer.

As observers on X have noted: despite a decade of "Her" predictions, voice-first interfaces never became popular. Could it be that humans just like using screens?

Yes. Screens are faster.

The Right Bet: Voice In, Text Out

The paradox resolves when you flip the output.

Voice input is genuinely faster than typing. Stanford research confirms speech operates at 150-160 words per minute; typing averages 40. When you're composing original thought (not just transcribing), the gap widens to 6x. Your brain evolved for speech over 300,000 years. It's been typing for 150.

Text output is genuinely faster to consume than voice. Reading beats listening. Skimming is impossible with audio. Searching for keywords requires text.

The optimal interface is obvious once you see it: speak to create, read to consume.

This isn't what Alexa built. Alexa talks back. The alternative is a system where you speak, AI transforms your speech into polished text, and you read the result. Voice in, text out.

The transformation in the middle is everything. Raw transcription produces messy output: filler words, false starts, rambling structures. But with LLMs, the output can be clean, formatted, and contextually appropriate. You dictate a rough thought; you get back a professional email. You ramble about three topics; you get back structured paragraphs. The AI handles organization so your brain doesn't have to.

Why This Moment Is Different

The technology stack for voice-to-text transformation just became production-ready in the last 18 months.

Transcription accuracy crossed 98% for most speakers in clean conditions. Real-world environments are harder: background noise, multiple speakers, and heavy accents still push error rates to 2-5% in challenging scenarios. But that's down from 15-20% five years ago. And crucially, LLMs can now clean up those remaining errors during the transformation step, turning imperfect transcription into polished output. The system doesn't need perfect input. It needs good-enough input plus intelligent processing.

Latency dropped below 500 milliseconds. LLMs became capable of restructuring messy speech into clean prose. The pieces exist. They work. The question is assembly.

Voice agents represented 22% of Y Combinator's H2 2024 class. The global voice AI infrastructure market is projected to grow from $5.4 billion in 2024 to $133.3 billion by 2034. Sequoia ranks it among their top five investment themes.

This isn't irrational exuberance about talking to computers. It's recognition that voice input, transformed into text output, solves real problems:

Every product that collects user input benefits. Support tickets become easier: click a button, describe your problem in 30 seconds, the system categorizes and formats it. Feedback forms become usable: speak your thoughts, get structured responses. Personalization flows work: describe your preferences naturally, the system extracts structured data. Nobody wants to type into forms. Everyone can talk for 20 seconds.

Every AI interaction becomes faster. Prompting ChatGPT, Claude, or any LLM requires text input. Typing complex prompts is slow. Speaking them is about 4x faster. As people interact with more AI tools daily, the input bottleneck becomes acute. Voice removes it.

Every device becomes a text creation tool. The same voice input works on your laptop, phone, tablet, watch. You speak the same way everywhere. The output is text you can use anywhere. Cross-platform consistency without learning different interfaces.

The Social Friction Solution

The obvious objection: "I can't talk to my computer at work. It's awkward."

Fair. But notice what's not awkward: sending voice messages on your phone. Recording videos. Taking calls. The social friction isn't about speaking. It's about speaking to a machine at your desk where others can see.

The solution is already in your pocket. Mobile apps let you dictate anywhere you're comfortable speaking, which is most places that aren't your open-plan office. A quick voice note in the hallway, the parking lot, or your kitchen. The text arrives wherever you need it.

For the truly paranoid, there are smartwatches. Speak to your wrist, text appears on your computer. The input happens privately. The output is ready when you return to your desk.

The "can't speak at work" problem is real but narrow. Most people can speak at home, in their car, while walking, while cooking. These are prime thinking times that keyboard-bound workflows waste entirely. Voice input captures them.

What Ubiquitous Voice-to-Text Looks Like

A joint study from LSE and Jabra predicts voice will become the mainstream way of working with generative AI by 2028. The research found 14% of knowledge workers already prefer voice over typing for AI interactions. In technology adoption models, 13.5% marks the transition from "early adopters" to "early majority," the inflection point where new interfaces go from niche to mainstream. We're just past that threshold, which historically means acceleration is coming.

Gen Alpha, those born after 2010, may never use keyboards as their primary input. Paul Sephton of Jabra told Fortune: "In the AI-powered workplace of the near future, the first draft of work will be spoken, not typed. Typing will serve only as an editing step, not a creative one."

The same study found trust in AI increases 33% when users interact through voice. Speaking feels like collaboration. Typing feels like commanding a machine.

What does this future look like in practice?

Support and feedback everywhere. Any product can add a voice input button. Users describe issues or preferences naturally. AI categorizes, formats, and routes. The support ticket or feedback form that nobody fills out becomes the 20-second voice note everyone actually uses.

Personalization through conversation. Instead of inferring user preferences through behavior tracking (creepy) or asking users to fill out settings (tedious), products let users describe what they want. "I like shorter emails, more direct, no filler." The voice input becomes structured preferences. Natural for users, useful for products.

Text creation without keyboards. Emails, documents, notes, messages. You speak the content, AI transforms it to match the context. Professional for clients, casual for friends, structured for reports. The cognitive load of formatting disappears. You focus on what to say, not how to type it.

AI interaction at the speed of thought. When you need to prompt multiple AI tools daily, typing becomes the bottleneck. Voice removes it. You speak your intent, the prompt appears, the AI responds. The back-and-forth that takes minutes with typing takes seconds with voice.

The Infrastructure Is Ready

At AICHE, we've spent the last year building exactly this: cross-platform voice-to-text with AI transformation. The same input works on Mac, Windows, Linux, iOS, Android, and Apple Watch. Local processing where possible for privacy. AI enhancement that turns raw speech into polished text, formatted for context.

The infrastructure is production-ready. We've built vendor-agnostic pipelines with automatic failover, so if one transcription service slows down, requests route to a faster one. Sub-second latency is achievable. Multi-language support is stable. The technical problems that made voice input frustrating five years ago are solved.

We're not alone in this bet. The investment flowing into voice AI infrastructure reflects a shared conviction: the interface layer is about to shift. Not to talking computers, but to speaking humans and reading humans, with AI transformation in between.

Alexa Will Come Back

Here's the optimistic ending for voice assistants: they'll succeed once they stop talking so much.

When Alexa becomes genuinely agentic (managing your calendar, ordering groceries, coordinating with other services) and delivers results as text you can quickly read and confirm, the value proposition changes. The problem was never voice input. It was voice output for information that's faster to read.

Future voice assistants will understand your spoken requests and respond with structured text, notifications, and completed actions you can verify at a glance. Voice in, text out, with agency in between.

The technology for this exists. The models are smart enough. The latency is low enough. What's missing is the interface paradigm shift: stop making users listen, start letting them read.

The Hypothesis

Here's what we believe will become obvious within five years:

Voice is the fastest input humans can produce. Text is the fastest input humans can consume. Any interface that respects both truths will outcompete interfaces that don't.

This applies to AI tools, productivity software, support systems, feedback collection, personalization flows, and any product that needs user input. The keyboard won't disappear, but it will become what the pen became: a specialized tool for specific tasks, not the default interface for text creation.

The voice paradox resolves when you separate input from output. Billions invested in voice-speaking machines failed because they forced slow output. Billions invested in voice-to-text transformation will succeed because they respect how humans actually process information.

Speak fast. Read faster. That's the future.